Rare genetic disorders can be identified by analysing images of people’s faces using a smartphone app powered by artificial intelligence – which has now been proven to be significantly more accurate than doctors.

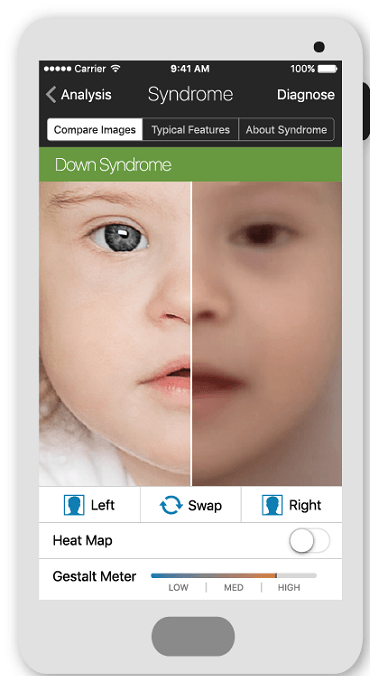

The Face2Gene app creates a list of ten genetic mutations it believes a patient may have – and which might not be easily recognised by doctors – so they can create personalised care plans based on the information.

It was created by US biotech company Facial Dysmorphology Novel Analysis (FDNA), which believes the technology will help prevent patients from enduring lengthy waits and making repeated clinic visits before they are finally diagnosed – giving them a better chance of receiving the right treatment early on.

FDNA has now published results from a “milestone study”, in which its facial analysis listed the correct disorder among its ten suggestions for 91% of 502 images.
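A figure like this is usually reported as a top-10 accuracy: an image counts as a hit when the confirmed disorder appears anywhere in the ten suggestions. The sketch below shows that calculation under this reading, which is an assumption about how the 91% was computed. The function name `top_k_accuracy` and the toy labels are made up for illustration and do not come from FDNA.

```python
from typing import Sequence


def top_k_accuracy(
    ranked_predictions: Sequence[Sequence[str]],
    true_labels: Sequence[str],
    k: int = 10,
) -> float:
    """Share of cases whose true label appears in the first k suggestions.

    Hypothetical helper for illustration only; not FDNA's evaluation code.
    """
    if len(ranked_predictions) != len(true_labels):
        raise ValueError("need exactly one ranked list per true label")
    if not true_labels:
        raise ValueError("need at least one case")
    hits = sum(
        1
        for ranked, truth in zip(ranked_predictions, true_labels)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)


# Toy data with placeholder disorder names: four images, three suggestions each.
suggestions = [
    ["A", "B", "C"],
    ["B", "A", "C"],
    ["C", "B", "A"],
    ["A", "C", "B"],
]
confirmed = ["A", "A", "A", "B"]

# Two of the four confirmed disorders appear within the first two suggestions.
print(top_k_accuracy(suggestions, confirmed, k=2))  # 0.5
```

On this reading, 91% of 502 images corresponds to roughly 457 images where the confirmed disorder was on the list.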

When distinguishing between sub-types of Noonan syndrome, it was 44 percentage points more accurate than human clinicians (64% against 20%).

FDNA chief technology officer Yaron Gurovich, who led the research, told Compleo: “It’s a very long process to arrive at the right diagnosis, or even a diagnosis at all, when dealing with rare disorders.

“Using our AI approach as part of the clinical workflow, clinicians are able to shorten this process significantly.”

Face2Gene is the first app to identify rare genetic disorders in patients from just photos

FDNA, which offers facial recognition tools to clinical geneticists, created Face2Gene using its own AI software, called DeepGestalt.

The company, based in Boston, Massachusetts, trains and improves the ability of this deep learning algorithm to identify facial characteristics associated with particular hereditary conditions.

It claims DeepGestalt is the first ever digital health tech to identify rare genetic disorders in individuals based on facial features alone.

Mr Gurovich said: “Our approach is the first to introduce the ability to analyse phenotypic information in a way where it can be used in a real clinical setting.

“In the latest study, we focused on the facial analysis aspect of our application and demonstrated how it is successfully being used in the clinical setting.

“Additionally, we outline how this approach outperforms previous methods, including human experts in three separate experiments.”

The deep learning system was trained on a new dataset of more than 17,000 facial images representing more than 200 genetic disorders.

Using the data, DeepGestalt learned to look for distinctive facial features associated with specific disorders.

In all three experiments, the system outperformed clinicians at identifying disorders.

FDNA chief medical officer Dr Karen Gripp said: “This is a long-awaited breakthrough in medical genetics that has finally come to fruition.

“With this study, we’ve shown that adding an automated facial analysis framework, such as DeepGestalt, to the clinical workflow can help achieve earlier diagnosis and treatment, and promise an improved quality of life.”

How DeepGestalt AI system outperformed geneticists

According to Mr Gurovich’s team, the “brain-like neural network” proved to be very reliable and worked better than doctors at identifying a range of genetic syndromes during each trial.

Its best performance came in distinguishing between sub-types of the genetic disorder Noonan syndrome, where it classified 64% of cases correctly. The condition causes a wide range of distinctive features and health problems, such as unusual facial features, short stature, heart defects and skeletal malformations.

This result was significantly better than that of clinicians in previous studies, who identified the disease correctly in only 20% of cases by looking at images of people with Noonan syndrome.

Launched in 2014, DeepGestalt is now used by 70% of the world’s geneticists across more than 2,000 clinical sites in 130 countries.

But the Face2Gene app it powers has only recently started to take hold as a means of diagnosing people with rare conditions. That is because it relies on cloud sharing between doctors, who are the only people with access to the app, to build up knowledge of various conditions.

Each time a geneticist confirms the diagnosis for an uploaded photo, the app incorporates the information into its database, which strengthens the algorithm.

Mr Gurovich said: “One common use case is when a patient visits a clinical geneticist, who will use our mobile app Face2Gene to take facial photos of the patient and add additional information such as their own clinical notes.

“The results are immediate and can indicate potential syndromes for the clinician to consider in their overall diagnosis.

“After the visit, the clinician will work with our web version of Face2Gene to add additional data to refine the results, while having access to various informational sources, such as the London Medical Database.”

How AI detects rare genetic disorders

Following an image upload to the Face2Gene platform, the DeepGestalt algorithm examines “landmarks” on the patient’s face – the shape of their face, eyes, nose and mouth, for example – and then splits the image into regions.

The tech then assesses each of these regions using a machine learning technique for automated image classification, and pinpoints recognisable characteristics and patterns in facial photos of patients to determine a possible set of rare genetic disorders.
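The region-by-region approach described above can be sketched as follows. The article does not say how the per-region results are combined, so this sketch assumes a simple average of the probabilities each region assigns to each disorder, then returns the highest-ranked candidates. The function name `rank_syndromes`, the placeholder disorder labels and the scores are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# One dict per facial region: disorder name -> probability from that region's
# classifier. These would come from a trained model; here they are made up.
RegionScores = Dict[str, float]


def rank_syndromes(
    region_scores: List[RegionScores], k: int = 10
) -> List[Tuple[str, float]]:
    """Average per-region probabilities and return the k best candidates.

    Averaging is an assumed aggregation rule, chosen for illustration.
    """
    if not region_scores:
        raise ValueError("need scores for at least one facial region")
    totals: Dict[str, float] = defaultdict(float)
    for scores in region_scores:
        for syndrome, probability in scores.items():
            totals[syndrome] += probability
    region_count = len(region_scores)
    averaged = {name: total / region_count for name, total in totals.items()}
    # Sort by descending score, breaking ties alphabetically for stable output.
    return sorted(averaged.items(), key=lambda item: (-item[1], item[0]))[:k]


# Toy scores for two regions (for example, eyes and mouth).
regions = [
    {"syndrome A": 0.6, "syndrome B": 0.3, "syndrome C": 0.1},
    {"syndrome A": 0.4, "syndrome B": 0.5, "syndrome C": 0.1},
]
for name, score in rank_syndromes(regions, k=2):
    print(f"{name}: {score:.2f}")
```

Averaging means one region cannot dominate the result on its own: in the toy data, the second region favours syndrome B, but syndrome A still ranks first overall.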

Mr Gurovich believes this simplified method of diagnosis is advantageous for clinicians.

He said: “This allows for the phenotypic [the observable characteristics of an individual] analysis to be accessed by geneticists and provides a unique reference tool, which aggregates many genetic insights into one mathematical model.”

A significant challenge for the FDNA team during the development of the app was how to build an efficient AI system in a field where access to data is limited.

Mr Gurovich said: “It is hard to obtain data, period – the algorithm has to find a way to learn and generalise from this while also adhering to a strict privacy policy.”

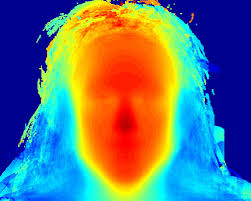

To help researchers understand which facial features led to a suggested diagnosis, the technology also displays the facial elements that most influenced the AI system’s result as a heat map.
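The article does not describe how this heat map is produced. One generic way to build such a map for any image classifier is occlusion sensitivity: hide one patch of the image at a time and record how much the model's score drops. The sketch below illustrates that general technique only and should not be read as FDNA's method. The `score` callable stands in for a real model, and all names and values are hypothetical.

```python
from typing import Callable, List

Image = List[List[float]]  # greyscale pixels as rows of floats


def occlusion_heatmap(
    image: Image,
    score: Callable[[Image], float],
    patch: int = 2,
    fill: float = 0.0,
) -> List[List[float]]:
    """Return, per pixel, the score drop when that pixel's patch is hidden."""
    height, width = len(image), len(image[0])
    baseline = score(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))
            # Copy the image and blank out one patch.
            occluded = [row[:] for row in image]
            for y in rows:
                for x in cols:
                    occluded[y][x] = fill
            # A large drop means the model relied heavily on this patch.
            drop = baseline - score(occluded)
            for y in rows:
                for x in cols:
                    heat[y][x] = drop
    return heat


def toy_score(img: Image) -> float:
    """Stand-in for a model: only looks at the top-left 2x2 corner."""
    return sum(img[y][x] for y in range(2) for x in range(2))


face = [[1.0] * 4 for _ in range(4)]
for row in occlusion_heatmap(face, toy_score):
    print(row)
```

In the toy case only the top-left patch shows a non-zero value, because that is the only area the stand-in model looks at.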

Mr Gurovich added: “We are constantly improving this tool by adding more data and expanding our network of users that help to train it.

“We’re also working on developing ways to look at the manifestation of genetic syndromes through other biomarkers such as voice, MRIs [medical imaging] and movement.”